When AI Doesn’t Fail — But the System Around It Does

- Sandeep Khuperkar, Founder & CEO, Data Science Wizards (DSW)

- Mar 31


Why Enterprises Need to Govern AI Decisions, Not Just Build Models

Executive Summary

Over the past decade, enterprises have made remarkable progress in building artificial intelligence systems.

AI models now power fraud detection, underwriting, credit risk evaluation, supply-chain optimization, and many other critical operations. These models are increasingly accurate and capable.

Yet as AI systems move from experimentation into real operational environments, a different pattern is emerging.

Most AI systems don’t fail just because the model is wrong.

They fail because no one is governing how AI decisions evolve in the real world.

Understanding this distinction is critical for the next phase of enterprise AI adoption.

The Enterprise AI Paradox

Organizations have invested heavily in improving:

model accuracy

training pipelines

feature engineering

data infrastructure

As a result, AI models today perform remarkably well in controlled environments.

However, once these models operate inside real business processes, new dynamics emerge.

Customer behavior changes.

Products evolve.

Operational workflows shift.

Regulatory policies change.

And when these environments evolve, AI decisions may drift in subtle ways even when model accuracy remains stable.

A Simple Example: AI Fraud Detection

Consider a bank using AI to detect fraud on credit card transactions. The AI system evaluates each transaction and decides:

Is this normal?

Or is this fraud?

Initially, everything appears to work perfectly.

The system catches fraudulent transactions.

Losses decline.

Reports show the model performing very well.

From every perspective, the AI system appears to be functioning exactly as intended, so everyone believes it is operating successfully.

Then Something Small Changes

Now imagine the bank launches a new mobile banking feature. Because of this update, customers begin using their cards differently.

Their behavior shifts slightly.

Their buying patterns change.

Transaction timing evolves.

Customer interaction with the app changes.

The AI model continues operating exactly as designed, applying the patterns it learned during training to the new behavior.

But not evenly.

For a specific group of customers, perhaps people in a particular city or demographic segment, transactions start getting flagged slightly more often.

Not dramatically more.

Just a little more than before.

And no one notices.

Why No One Notices

Many organizations monitor AI systems using aggregate performance metrics such as:

accuracy

precision

fraud loss reduction

And those metrics still look strong.

The dashboard continues to report:

“Everything is fine.”

From the perspective of model evaluation, nothing appears broken.

But underneath the surface, something subtle has changed.

Some customers are now:

getting more transactions blocked

receiving more fraud alerts

facing more friction in their daily banking experience

Even though they did nothing wrong.

The Model Did Not Fail

This is the key insight.

The AI model itself did not fail.

It continues detecting fraud according to the patterns it was trained to identify. The model is still functioning correctly.

The real problem is that the system around the AI was not monitoring how its decisions affect real-world behavior.

The monitoring system was only asking:

“Is the model accurate?”

But it was not asking:

how decisions evolve across different customer segments

how behavior shifts over time

whether decision pathways drift after product changes

So the model worked.

But the architecture responsible for governing the AI system failed.

What Actually Happened in the Example

Nothing “broke” in the model.

The model continued doing exactly what it was designed to do:

Identify patterns that resemble past fraud.

However, when customer behavior changed because of the new mobile banking feature, some legitimate transactions began to look slightly more suspicious according to the patterns the model had learned.

As a result, the model flagged those transactions more often.

This does not mean the transactions were fraudulent.

It simply means the patterns now resembled fraud according to the model’s learned logic.

Technically speaking:

The model behaved correctly according to its training.

But the impact of its decisions changed in the real world.

Why the Model Was Still “Correct”

Most AI systems are evaluated using statistical performance metrics such as:

accuracy

precision

recall

fraud loss reduction

Suppose:

before the behavioral change: fraud detection accuracy = 94%

after the behavioral change: fraud detection accuracy = 94%

From a model evaluation perspective, nothing changed.

However, in practice, one customer segment might now experience 10–15% more false alerts.

The model remains statistically correct overall.

But behaviorally, its outcomes are now uneven.

This distinction is critical.
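A few confusion-matrix counts make the 94% example concrete. The sketch below is a minimal Python illustration that uses hypothetical numbers, not data from any real bank. The counts are chosen so that aggregate accuracy is identical before and after the change, while false alerts in one segment rise by about 12%. The names `Counts`, `overall_accuracy`, and `false_alerts` are illustrative and do not belong to any product.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Counts:
    """Confusion-matrix counts for one customer segment (hypothetical numbers)."""
    tp: int  # fraud correctly flagged
    fp: int  # legitimate transactions flagged (false alerts)
    fn: int  # fraud missed
    tn: int  # legitimate transactions passed

    @property
    def total(self) -> int:
        return self.tp + self.fp + self.fn + self.tn


def overall_accuracy(segments: dict[str, Counts]) -> float:
    """Aggregate accuracy across all segments: the number a typical dashboard shows."""
    correct = sum(c.tp + c.tn for c in segments.values())
    total = sum(c.total for c in segments.values())
    return correct / total


def false_alerts(segments: dict[str, Counts]) -> dict[str, int]:
    """False alerts per segment: legitimate customers who were flagged."""
    return {name: c.fp for name, c in segments.items()}


# Illustrative counts only, chosen so aggregate accuracy stays at 94%.
before = {
    "segment_a": Counts(tp=40, fp=290, fn=10, tn=4660),
    "segment_b": Counts(tp=40, fp=290, fn=10, tn=4660),
}
after = {
    "segment_a": Counts(tp=40, fp=255, fn=10, tn=4695),
    "segment_b": Counts(tp=40, fp=325, fn=10, tn=4625),
}

print(f"accuracy before: {overall_accuracy(before):.1%}")  # 94.0%
print(f"accuracy after:  {overall_accuracy(after):.1%}")   # 94.0%

for name in before:
    b, a = false_alerts(before)[name], false_alerts(after)[name]
    print(f"{name}: false alerts {b} -> {a} ({(a - b) / b:+.1%})")
```

In this made-up scenario, segment A's drop in false alerts exactly offsets segment B's rise, so the aggregate number cannot move. Only a per-segment breakdown reveals that segment B's customers now face about 12% more false alerts.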

The Real Problem: AI Operating Without Governance

Traditional monitoring asks:

“Is the model accurate?”

But enterprises increasingly need to ask a different question:

“How are AI decisions affecting different users and workflows?”

AI systems do not operate inside static datasets.

They operate inside dynamic enterprise environments, including:

customer interactions

financial transactions

operational workflows

regulatory frameworks

And these environments constantly change.

When they do, AI decision behavior may drift in ways that traditional monitoring does not detect.
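The article does not prescribe a drift metric. One widely used choice in credit and fraud monitoring is the Population Stability Index (PSI). PSI compares the distribution of model scores, or decisions, in a recent window against a baseline window. The sketch below is a minimal Python version built on stated assumptions: scores lie in [0, 1], bins are equal-width, and a small epsilon stands in for empty bins. The function name `psi` and the sample data are hypothetical.

```python
import math
from collections.abc import Sequence


def psi(expected: Sequence[float], actual: Sequence[float],
        bins: int = 10, eps: float = 1e-6) -> float:
    """Population Stability Index between two samples of model scores in [0, 1].

    Scores are bucketed into equal-width bins; eps replaces empty-bin
    proportions so the logarithm is always defined.
    """
    def proportions(scores: Sequence[float]) -> list[float]:
        if not scores:
            raise ValueError("need at least one score")
        counts = [0] * bins
        for s in scores:
            # Clamp so a score of exactly 1.0 lands in the last bin.
            idx = min(int(s * bins), bins - 1)
            counts[idx] += 1
        n = len(scores)
        return [max(c / n, eps) for c in counts]

    e, a = proportions(expected), proportions(actual)
    # Each term is non-negative; larger totals mean a bigger distribution shift.
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


# Hypothetical score samples: a baseline window and a window after a product change.
baseline = [i / 100 for i in range(100)]
shifted = [min(99, i + 10) / 100 for i in range(100)]
print(f"PSI baseline vs baseline: {psi(baseline, baseline):.3f}")  # 0.000
print(f"PSI baseline vs shifted:  {psi(baseline, shifted):.3f}")
```

A commonly cited rule of thumb treats a PSI below 0.1 as stable and one above 0.25 as a significant shift, but each use case should set its own thresholds. PSI measures how much a distribution has moved, not whether predictions are correct. That is why it can surface changes that an accuracy dashboard does not show.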

Where Governance Comes In

AI governance is not about correcting model predictions.

It is about controlling how AI decisions behave within enterprise systems.

Governance answers questions such as:

Are certain customer segments experiencing disproportionate outcomes?

Are decision patterns drifting after product or behavior changes?

Are AI decisions still aligned with business policies and regulatory frameworks?

Should thresholds or guardrails adjust when behavior patterns shift?
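The first and last questions above can be turned into a concrete check. Compare each segment's flag rate in a recent window against that segment's own baseline, and route segments whose rate rose beyond a tolerance to human review. This minimal Python sketch makes several assumptions: decisions arrive as `(segment, was_flagged)` pairs, the 10% tolerance is a hypothetical policy value, and the function names are illustrative.

```python
from collections import defaultdict
from collections.abc import Iterable


def flag_rates(decisions: Iterable[tuple[str, bool]]) -> dict[str, float]:
    """Share of transactions flagged as fraud, per customer segment."""
    flagged: dict[str, int] = defaultdict(int)
    seen: dict[str, int] = defaultdict(int)
    for segment, is_flagged in decisions:
        seen[segment] += 1
        flagged[segment] += int(is_flagged)
    return {seg: flagged[seg] / seen[seg] for seg in seen}


def segments_to_review(baseline: dict[str, float], current: dict[str, float],
                       max_relative_increase: float = 0.10) -> list[str]:
    """Segments whose flag rate rose by more than max_relative_increase vs. baseline.

    The 10% default is a hypothetical policy value, not a recommendation.
    """
    review = []
    for seg, rate in current.items():
        base = baseline.get(seg)
        if base is None:
            review.append(seg)  # no baseline yet: route to a human rather than guess
        elif base == 0:
            if rate > 0:
                review.append(seg)  # any flags where there were none before
        elif (rate - base) / base > max_relative_increase:
            review.append(seg)
    return sorted(review)


# Hypothetical decision logs: (segment, was_flagged) for 100 transactions each.
week_before = [("city_x", i < 6) for i in range(100)] + [("city_y", i < 6) for i in range(100)]
week_after = [("city_x", i < 6) for i in range(100)] + [("city_y", i < 7) for i in range(100)]
print(segments_to_review(flag_rates(week_before), flag_rates(week_after)))  # ['city_y']
```

This sketch escalates to a person instead of retuning thresholds automatically. Whether a guardrail should adjust is itself a governance decision.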

In other words:

Governance supervises the real-world impact of AI decisions.

A Simple Analogy

Consider a highway speed camera.

The camera accurately measures vehicle speed.

Now imagine the speed limit sign accidentally changes from 60 km/h to 40 km/h. Suddenly thousands of drivers begin receiving fines.

The camera is working correctly.

But the governance of the system around it has failed.

Someone should be monitoring:

how many drivers are being fined

whether rule changes created unintended consequences

This is not a sensor failure.

It is a system governance failure.

The Core Insight

The model did not fail.

The enterprise simply did not have a system governing how AI decisions evolve in production.

Such a governance system should monitor:

decision distribution

behavioral drift

segment impact

workflow consequences

What Governance Does in an AI Operating System

A properly designed AI governance system ensures that AI decisions remain:

traceable

fair across segments

aligned with policy

consistent with operational goals

It acts as runtime supervision for AI decisions.
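Of these properties, traceability is the most mechanical to implement. One way to picture runtime supervision is a wrapper that records the model version, policy version, score, and action for every decision. The Python sketch below is minimal and rests on hypothetical pieces: a scoring function, a single threshold, and an in-memory list standing in for the log. A production system would write to durable, append-only storage. This is not the DSW UnifyAI OS API, only an illustration of the idea.

```python
import json
import time
from dataclasses import asdict, dataclass
from typing import Callable


@dataclass(frozen=True)
class DecisionTrace:
    """One auditable record per AI decision (hypothetical schema)."""
    transaction_id: str
    segment: str
    model_version: str
    policy_version: str
    score: float
    action: str
    timestamp: float


class DecisionSupervisor:
    """Wraps a scoring function so every decision is made under a policy and logged."""

    def __init__(self, score_fn: Callable[[dict], float], model_version: str,
                 threshold: float, policy_version: str) -> None:
        self.score_fn = score_fn
        self.model_version = model_version
        self.threshold = threshold
        self.policy_version = policy_version
        self.traces: list[DecisionTrace] = []  # stand-in for an append-only store

    def decide(self, transaction: dict) -> str:
        score = self.score_fn(transaction)
        action = "flag" if score >= self.threshold else "approve"
        self.traces.append(DecisionTrace(
            transaction_id=transaction["id"],
            segment=transaction["segment"],
            model_version=self.model_version,
            policy_version=self.policy_version,
            score=score,
            action=action,
            timestamp=time.time(),
        ))
        return action


def toy_model(txn: dict) -> float:
    # Hypothetical stand-in for a real fraud model: larger amounts score higher.
    return min(txn["amount"] / 1000, 1.0)


sup = DecisionSupervisor(toy_model, model_version="fraud-v3",
                         threshold=0.8, policy_version="policy-v7")
print(sup.decide({"id": "t1", "segment": "city_x", "amount": 120}))  # approve
print(sup.decide({"id": "t2", "segment": "city_y", "amount": 950}))  # flag
print(json.dumps(asdict(sup.traces[-1])))
```

Each record carries the segment and the action, so the same log can feed segment-level decision distributions. The version fields make it possible to tie a change in outcomes to a specific model or policy release.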

The One-Line Explanation

The model predicts.

Governance watches how those predictions behave in the real world.

Why This Matters for Enterprise AI Architecture

As AI becomes embedded in critical business processes, enterprises require more than model development platforms.

They require systems that operate and govern AI at scale.

Traditional operating systems such as Linux, Unix, and Windows operate and govern compute infrastructure.

Similarly, enterprises now need a system that builds, operates, and governs AI.

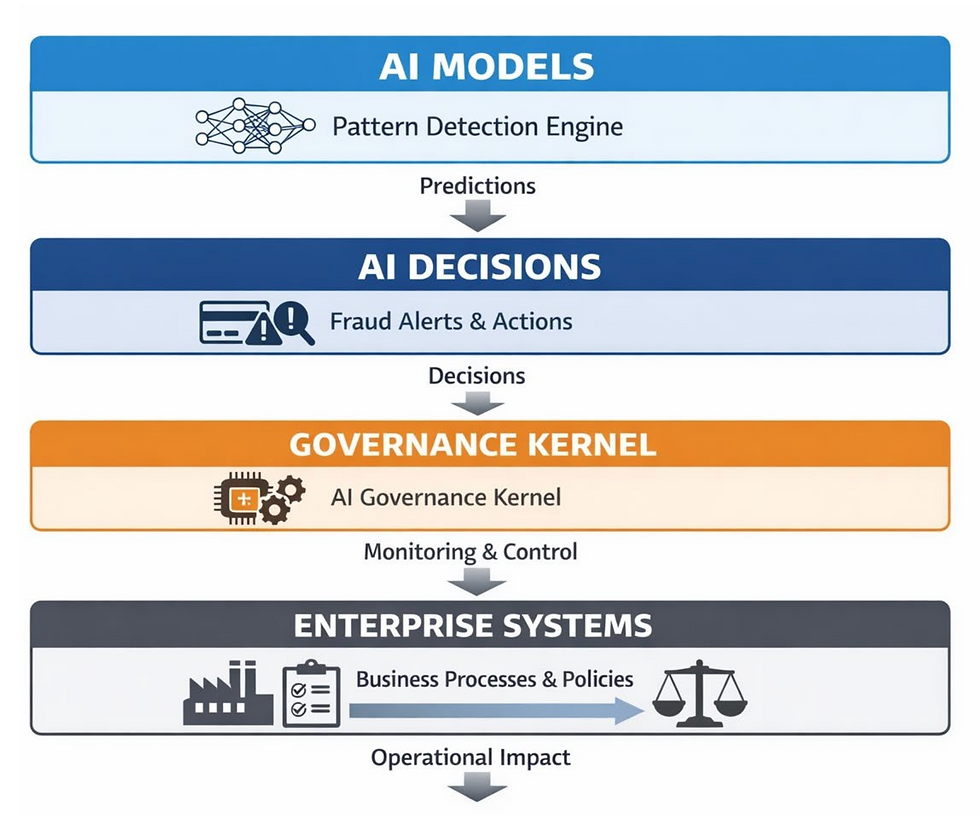

This is the architectural foundation behind:

DSW UnifyAI OS — The Enterprise AI Operating System

We are not replacing Linux, Unix, or Windows. Those operating systems govern and operate compute as a system. DSW UnifyAI OS sits on top of them as a layer that governs and operates AI as a system.

A Kernel-First Approach to AI Systems

At Data Science Wizards, we believe AI governance must exist at the core system layer, not as an afterthought.

This is why DSW UnifyAI OS follows a Kernel-First AI system architecture.

The kernel of a traditional operating system governs:

processes

resource access

system stability

In the same way, the AI governance kernel inside DSW UnifyAI OS governs how AI systems behave in production.

It supervises:

model execution

workflow orchestration

policy enforcement

decision traceability

behavioral drift detection

lifecycle management

Governance therefore becomes part of the operating system itself, not a monitoring dashboard added later.

A New Question for Enterprise AI

As organizations scale their use of AI, they must move beyond asking:

“Is the model accurate?”

And begin asking:

“How are AI decisions behaving in the real world?”

Because the future of enterprise AI will not be defined solely by better models.

It will be defined by the ability to operate and govern AI safely at scale.

A Simple Way to Think About It

AI models answer:

“What is likely?”

AI governance answers:

“What should happen when the model says that?”

And answering that second question is precisely where an Enterprise AI Operating System becomes essential.
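The split between "What is likely?" and "What should happen?" can be expressed as a policy that sits outside the model. In the minimal Python sketch below, the score bands, the cut-offs, and the action names (such as `step_up_verification`) are all hypothetical. The point is that changing the response to a given score becomes a policy edit, not a retraining job.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Band:
    """A score range [low, high) and the business action it maps to."""
    low: float
    high: float
    action: str


# Hypothetical policy: the model supplies the score; the policy decides the response.
DEFAULT_POLICY = (
    Band(0.0, 0.6, "approve"),
    Band(0.6, 0.9, "step_up_verification"),  # ask the customer to confirm instead of blocking
    Band(0.9, 1.01, "block_and_review"),     # upper bound above 1.0 so a score of 1.0 is covered
)


def decide(score: float, policy: tuple[Band, ...] = DEFAULT_POLICY) -> str:
    """Answer "what should happen?" for a model score in [0, 1]."""
    for band in policy:
        if band.low <= score < band.high:
            return band.action
    raise ValueError(f"score {score} is not covered by the policy")


print(decide(0.35))  # approve
print(decide(0.72))  # step_up_verification
print(decide(0.97))  # block_and_review
```

Because the policy is plain data, a governance process can review, version, and change it, for example by widening the step-up band for a segment that is seeing more false alerts, without touching the model.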


Disclaimer: The opinions expressed within this article are the personal opinions of the author. The facts and opinions appearing in the article do not reflect the views of IIA and IIA does not assume any responsibility or liability for the same.